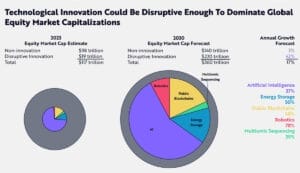

Catalysed by breakthroughs in artificial intelligence (AI), the equity market value associated with disruptive innovation could soar from 16 per cent of global stockmarket capitalisation to more than 60 per cent by 2030, according to Ark Invest’s Big Ideas 2024 report.

The annualised equity return from disruptive innovation could exceed 40 per cent over the rest of the decade, increasing its market capitalisation from US$19 trillion (A$29 trillion) today to about US$220 trillion by 2030.
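As a rough check on how these two figures fit together, the annual growth rate implied by moving from US$19 trillion to US$220 trillion can be worked out with the standard compound-growth formula. This is an illustrative sketch only. It treats growth in market capitalisation as a stand-in for equity return, ignoring dividends, new listings and share issuance. The seven-year horizon, from the end of 2023 to 2030, is an assumption and is not stated in the figures above.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the constant annual growth rate that turns start_value into end_value."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the article, in US$ trillions.
START_CAP = 19.0
END_CAP = 220.0

# Assumption: compounding runs from end-2023 to 2030 (seven years);
# a six-year horizon is shown for comparison.
for horizon in (6, 7):
    rate = implied_cagr(START_CAP, END_CAP, horizon)
    print(f"{horizon} years: {rate:.1%} a year")
```

On those assumptions the implied rate is roughly 42 per cent a year over seven years, or about 50 per cent over six. Both are consistent with a return that "could exceed 40 per cent".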

The Florida-based investment manager believes “convergence among disruptive technologies will define this decade. Five major technology platforms—AI, public blockchains, multiomic sequencing, energy storage and robotics—are coalescing and should transform global economic activity”.

Tonal sentiment

Goldman Sachs Asset Management believes asset owners can capitalise on this explosive growth not just by deploying capital directly into AI-related opportunities but also by “evaluating how AI breakthroughs are helping to enhance investment management processes and investment decision-making”.

In its recent Dual Dynamics: Investing In And With Artificial Intelligence note, Goldman Sachs mentions new AI tools, including large language models programmed to analyse tonal sentiment during earnings calls.

This means “signals detecting not just ‘what management is saying’ but ‘how management is saying it’”, according to Goldman Sachs.

“Management that speaks with characteristics of confidence in their tone, for example, can implicitly be expressing a positive outlook for their company. Evasiveness of speech may correlate with the intent to avoid drawing attention to poor firm prospects,” the note said.

Westworld

The combined message from Ark and Goldman Sachs to asset owners seems to be that it won’t be long until the majority of their portfolios are invested in AI-related companies – and that they will also be relying on AI to help choose what AI stocks to invest in.

Whether AI software might have – or be able to teach itself to have – a bias towards AI stocks (and away from non-AI stocks) is unclear.

In any event, one is reminded of the poster tagline for the 1973 sci-fi film Westworld – an amusement park “where nothing can possibly go worng.”

The Magnificent Seven

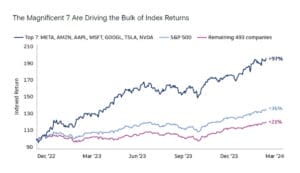

While on the subject of famous films, we may have already had a glimpse into this future with the AI-fuelled surge in global technology stocks led by the so-called Magnificent Seven – Alphabet, Amazon, Apple, Meta Platforms, Microsoft, NVIDIA and Tesla.

The seven collectively drove 70 per cent of the absolute performance of the Nasdaq Composite Index in 2023. The S&P 500 index returned 26 per cent in 2023, but without the Magnificent Seven the index would have risen only 8 per cent.
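The gap between the two S&P 500 figures can be unpacked with weighted-average arithmetic. Over a single year, an index's return is roughly the sum of each group's starting weight multiplied by that group's return. The sketch below solves for the return the seven would have needed. The 20 per cent starting weight is a hypothetical input chosen for illustration and is not taken from this article. The model also ignores intra-year rebalancing and index membership changes.

```python
def index_return(group_weight: float, group_return: float, rest_return: float) -> float:
    """One-period return of an index split into two groups, using start-of-period weights."""
    return group_weight * group_return + (1 - group_weight) * rest_return


def implied_group_return(group_weight: float, index_ret: float, rest_return: float) -> float:
    """Solve index_return(group_weight, g, rest_return) == index_ret for g."""
    if not 0 < group_weight <= 1:
        raise ValueError("group_weight must be in (0, 1]")
    return rest_return + (index_ret - rest_return) / group_weight


# Article figures: S&P 500 up 26% in 2023, 8% without the Magnificent Seven.
SP500_RETURN = 0.26
REST_RETURN = 0.08
# Hypothetical starting weight of the seven in the index (illustrative assumption only).
ASSUMED_WEIGHT = 0.20

group = implied_group_return(ASSUMED_WEIGHT, SP500_RETURN, REST_RETURN)
contribution = ASSUMED_WEIGHT * group  # share of the index return coming from the seven
print(f"Implied return of the seven: {group:.0%}")
print(f"Their contribution: {contribution * 100:.1f} percentage points of {SP500_RETURN:.0%}")
```

With the hypothetical 20 per cent weight, the seven would have needed a return of about 98 per cent to lift the index from 8 to 26 per cent. A larger assumed weight would imply a smaller required return.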

However, the seven pose a conundrum for ESG-conscious investors: most of them have weak ESG credentials.

Morningstar’s Global Markets Sustainability Index, for example, excludes Tesla, Meta, Alphabet and Amazon on various ESG grounds. Yet excluding these stocks from a portfolio carries the risk of lower returns.

This is highly relevant to Australians’ retirement savings, as our biggest super funds are increasingly investing in global technology stocks.

For example, the five largest US shareholdings of AustralianSuper, UniSuper and C-Bus are all cast members of the Magnificent Seven.

Not the Australian way

The Australian Government is currently embroiled in battles with two Magnificent Seven identities, Elon Musk and Mark Zuckerberg.

Last month, when Musk’s X refused to comply with court orders to remove violent footage globally, Australian Prime Minister Anthony Albanese described him as an “arrogant billionaire who thinks he’s above the law, but also above common decency”.

Senator Jacquie Lambie said Musk, who is also Tesla’s CEO, was “an absolute friggin’ disgrace” who should be jailed.

When Zuckerberg’s Meta, the owner of Facebook, announced it would not renew its existing deals to pay Australian publishers for news content, Albanese said “the idea that one company can profit from others’ investment – not just investment in capital but investment in people, investment in journalism – is unfair. That’s not the Australian way”.

Federal Assistant Treasurer Stephen Jones said “Meta seems more determined to remove journalists from their platform than criminals”.

Does all this mean ESG-conscious asset owners should be reconsidering their investments in companies run by these billionaires – or can all this be ignored as just more political posturing?

Absence of alignment

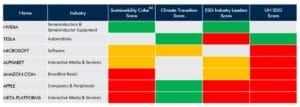

Copenhagen-based asset manager Qblue Balanced recently analysed the seven, considering three dimensions: Climate Transition, ESG Industry Leaders and UN Sustainable Development Goal (SDG) Alignment.

Qblue found “these companies often only excel in one or two dimensions, if any, while performing poorly in others”.

Qblue said a big issue was the absence of “alignment” between “corporate practices” and corporate policies and initiatives.

There were often controversies around “labour management and practices, human capital development, and product safety and quality, all of which directly impact several SDGs and the ‘S’ in ESG. Additionally, governance-related controversies, such as anticompetitive practices, are frequently observed among these companies”.

Semiconductor maker NVIDIA was the only company that had a good record across the board.

Responsible AI and ESG

So how do asset owners navigate this?

Last month CSIRO and Alphinity Investment Management published The intersection of Responsible AI and ESG: A Framework for Investors to help asset owners and managers assess the ESG implications of the design, development and deployment of AI.

“ESG ambitions are a proxy for good AI management. Because AI is evolving so rapidly, good leadership on existing topics like cyber, diversity and employee engagement is a proxy that the impact of AI will also be considered thoughtfully,” CSIRO and Alphinity said.

Company interviews were conducted as part of the framework’s development. Worryingly, “human rights and modern slavery were not identified as concerns in the interviews. With one of the core AI ethics principles focusing on human rights, this topic remains critical, yet underexplored in the AI space,” they said.

AI and Human Rights Investor Toolkit

The Responsible Investment Association Australasia (RIAA) has also just released its Artificial Intelligence And Human Rights Investor Toolkit.

The RIAA says potential benefits of AI for society and companies are immense but at the same time “there is growing awareness about the risks, some potentially catastrophic, posed by AI when inadequately designed, inappropriately or maliciously deployed or overused”.

The RIAA highlighted the “inadequate consideration of adverse human rights impacts when new products and services using AI are deployed, often in a bid to gain the first mover’s advantage in the market.

“In human rights terms, AI poses risks of bias and exacerbates systemic discrimination of individuals, particularly those from historically marginalised communities. AI can increase system vulnerability to cyber-attacks resulting in privacy violations at scale. AI can facilitate targeted attacks on vulnerable groups, particularly children, in addition to other human rights abuses”.

No human rights offset

The United Nations Guiding Principles on Business and Human Rights (UNGPs) clearly state that human rights due diligence should consider only the adverse human rights impacts of business activities.

A key reason for this is that “including both adverse and positive human rights impacts runs the risk of the human rights due diligence offsetting the negative impacts with positive contributions elsewhere, which the UNGPs make clear is not acceptable,” the toolkit says.

The UNGPs say “Business enterprises may undertake other commitments or activities to support and promote human rights, which may contribute to the enjoyment of rights. But this does not offset a failure to respect human rights throughout their operations”.

The B-Tech Project, which provides guidance on implementing the UNGPs in the technology space, says focusing on human rights gives companies, regulators and civil society a well-established list of impacts against which to assess and address the impacts of generative AI systems.

“Human rights are connected to at-risk stakeholders’ lived experience, and are also more specific than terms often used to describe the characteristics of generative AI systems, including ‘safe’, ‘fair’, ‘responsible’ or ‘ethical’,” according to B-Tech’s Taxonomy of Human Rights Risks Connected to Generative AI.

With AI, “human rights impacts are often heightened for groups or populations already at elevated risk of becoming vulnerable or marginalised, including women and girls,” according to the taxonomy.

B-Tech gives examples such as ‘deepfake pornography’, the creation of child sexual abuse material and generative AI being used to facilitate human trafficking. In all these cases, women and girls are at heightened risk.

Investing in AI scamming

Meanwhile, Warren Buffett, one of the world’s most successful investors, is concerned that AI deepfakes will make financial scams dramatically more effective.

Referring to a deepfake video of himself, Buffett told Berkshire Hathaway’s recent annual meeting that it was so convincing he could have “sent money to myself over in some crazy country”.

The Berkshire chairman said “If I was interested in investing in scamming, it’s going to be the growth industry of all time”.
